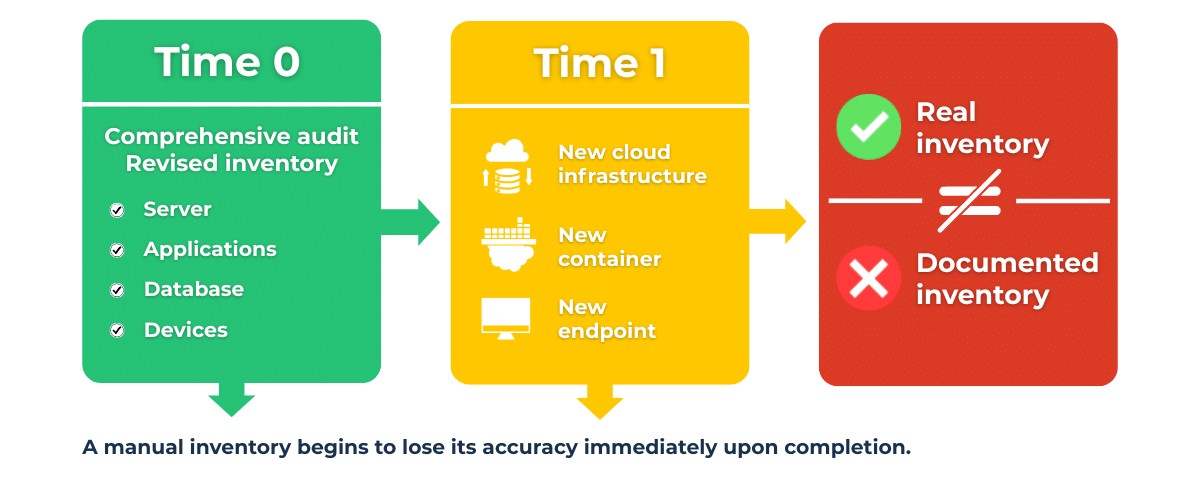

Those who work in IT or cybersecurity are familiar with the unique feeling that comes at the end of an audit or after a thorough infrastructure review. For a few hours, sometimes a few days, it truly feels like you have everything under control.

The asset inventory is updated, the applications are listed, and every component appears properly documented. At that moment a brief illusion of completeness takes hold: the sense of standing at the proverbial “ground zero,” the point at which the infrastructure finally appears fully mapped.

In reality, the moment the inventory is closed, a new virtual machine may be started, a cloud service activated, or a container created and destroyed within minutes. Nobody updates the inventory as these changes happen, so the freshly completed inventory is already obsolete.

The result closely resembles entropy: data steadily loses order and precision. In asset management this decay is practically immediate, because digital infrastructures are dynamic environments whose components and relationships evolve in real time.

If keeping an inventory up to date was already a challenge in the past, the complexity today is far greater.

The first factor is the expansion of the technological perimeter. Corporate infrastructure once consisted mainly of servers in the data center and employee devices; today an organization’s digital architecture also includes cloud environments, containers, and SaaS applications managed by third parties. Much of this infrastructure can be created and destroyed extremely quickly.

A Kubernetes cluster, for example, can spin up new containers within seconds to absorb a traffic spike and remove them as soon as the load subsides. From an asset management perspective this introduces an entirely new dimension, since some infrastructure components exist for only a few minutes.
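A simplified model shows why periodic snapshots struggle with short-lived components. It rests on assumptions of our own, not measured data. Scans are treated as instantaneous and run at a fixed interval $T$. A container lives for $t$ minutes, and its start time is uniformly distributed relative to the scan schedule. The container is recorded only if a scan falls inside its lifetime. For a 5-minute container and a daily scan ($T = 1440$ minutes), this gives:

$$
P(\text{detected}) = \min\left(1, \frac{t}{T}\right),
\qquad
t = 5\ \text{min},\ T = 1440\ \text{min} \;\Rightarrow\; P = \frac{5}{1440} \approx 0.35\%
$$

Under this model, a component that lives for less than half the scan interval is more likely to be missed than recorded.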

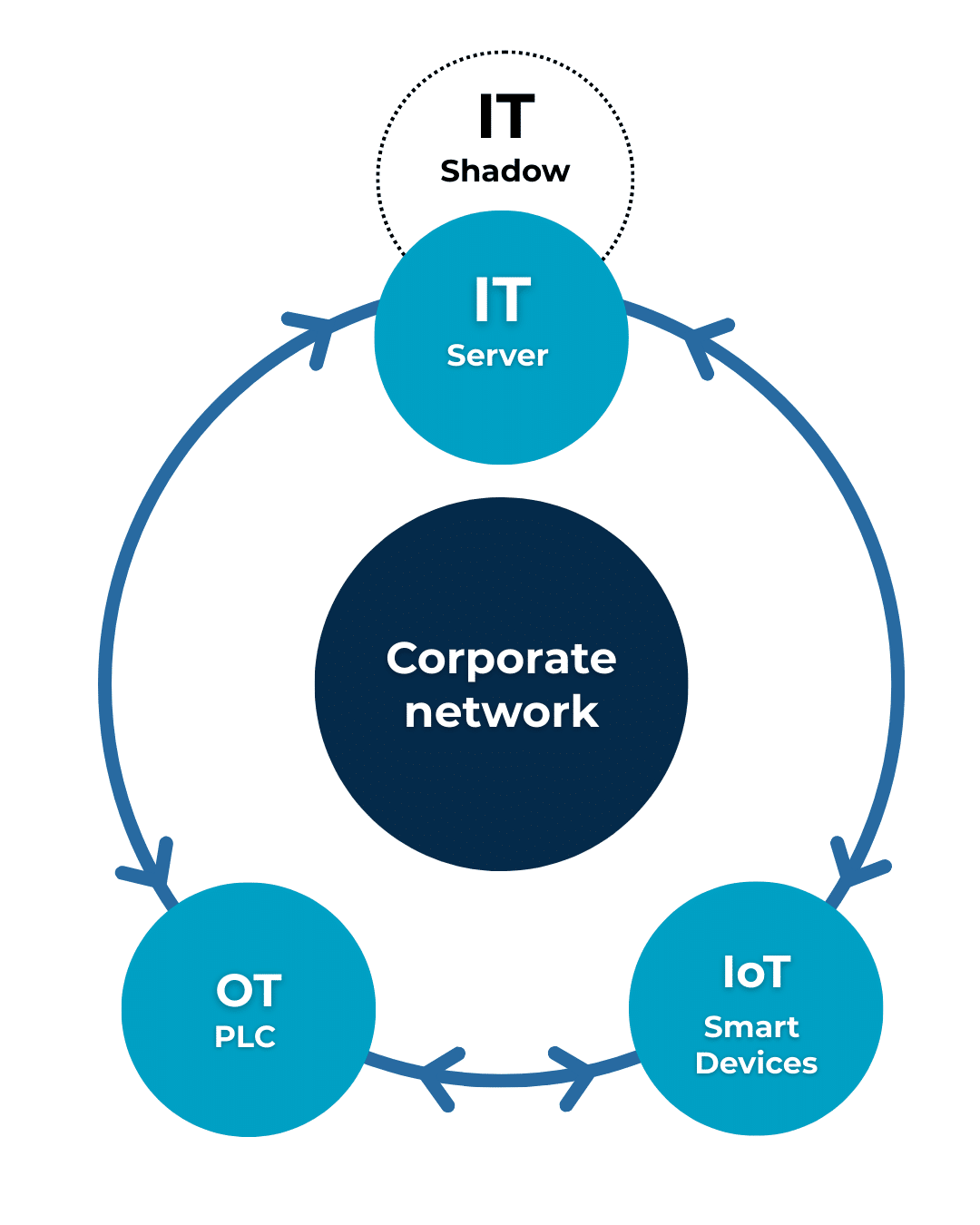

Added to this transformation is a second, highly significant phenomenon: the convergence of IT and OT. Corporate information systems increasingly communicate with industrial machinery and control systems, such as PLCs that govern production lines, while production data is sent to cloud platforms for analysis. This integration creates a much broader technological surface than ever before.

At the same time, IoT devices have proliferated: environmental sensors, monitoring systems, and connected equipment add hundreds or thousands of new nodes to the network. These systems often use protocols very different from traditional IT ones, such as Modbus or OPC UA in OT environments, or, in the IoT world, lightweight protocols designed for resource-constrained devices, such as MQTT or CoAP. As a result, traditional discovery tools, designed around conventional IT protocols and services, capture only a portion of the communications actually taking place on the network.

Then there is a phenomenon many organizations know well: the proliferation of systems that arise outside formal IT management processes. A marketing team activates a SaaS service to run a campaign, a group of developers opens a temporary cloud account for a project, a consultant connects a laptop to the company network during an on-site job. All of these feed the well-known problem of Shadow IT, recently joined by Shadow AI, against which today’s technical countermeasures are often still partial or insufficient.

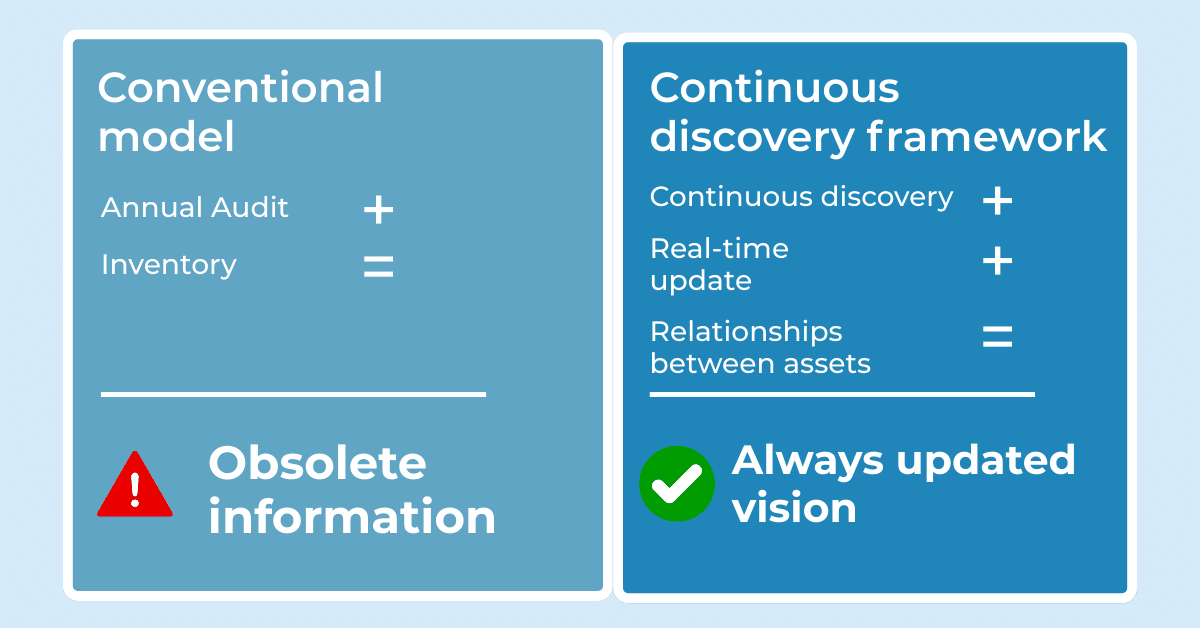

Many organizations continue to manage asset inventory through manual processes, often supported by spreadsheets or periodically updated documentation systems. This approach entails significant operational costs, which often remain hidden within the daily activities of IT teams. Maintaining a manual inventory requires constant commitment from those involved, and every change to the infrastructure must be recorded, verified, and integrated into existing documentation.

Translated into organizational effort, the burden quickly becomes clear: several hours of work each week, spread across system administrators, security managers, and infrastructure management teams.

Beyond operational costs, there is regulatory compliance. Many security frameworks and standards require clear, up-to-date visibility into infrastructure assets. Regulations such as GDPR and NIS2, and standards such as ISO/IEC 27001, require organizations to demonstrate which systems they manage and which security controls are applied to them. When infrastructure data is outdated or incomplete, demonstrating compliance reliably becomes difficult.

Finally, there is a dimension closely tied to security. An asset that isn’t listed in the inventory tends to be left out of many other operational activities, such as updates, monitoring, and vulnerability management. Under these conditions, a neglected system can easily become an entry point for an attacker, simply because no one is aware of its presence.
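A first practical check follows from this: compare what is actually observed on the network with what the inventory lists. The sketch below assumes both sources can be reduced to records keyed by MAC address. The `Asset` type, its fields, and the sample data are hypothetical. Real environments (NAT, virtual NICs, randomized MACs) need a more robust identity key.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    # Hypothetical record: real discovery tools expose many more attributes.
    mac: str
    ip: str


def unmanaged_assets(observed: list[Asset], inventory: list[Asset]) -> list[Asset]:
    """Return assets seen on the network whose MAC address is not in the inventory."""
    known = {a.mac.lower() for a in inventory}
    return [a for a in observed if a.mac.lower() not in known]


if __name__ == "__main__":
    inventory = [Asset("00:1A:2B:3C:4D:5E", "10.0.0.10")]
    observed = [
        Asset("00:1a:2b:3c:4d:5e", "10.0.0.10"),  # inventoried server, different case
        Asset("AA:BB:CC:DD:EE:FF", "10.0.0.77"),  # e.g. a consultant's laptop
    ]
    for asset in unmanaged_assets(observed, inventory):
        print(f"Unmanaged: {asset.mac} at {asset.ip}")
```

Each asset returned here is, by definition, outside the inventory. Without a check like this, it would also be left out of patching, monitoring, and vulnerability management.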

Faced with this complexity, many organizations are beginning to rethink how they approach asset discovery.

The most significant change is the shift from a static snapshot to continuous observation. Instead of checking the infrastructure periodically, modern platforms monitor the network constantly and automatically detect new systems or changes in how assets communicate. This approach is often called continuous discovery: the infrastructure is treated as a dynamic system whose data is updated progressively as components interact.
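At its simplest, continuous discovery means comparing each new observation of the network with the previous one and emitting events for what changed, instead of replacing one static list with another. The minimal sketch below assumes each snapshot is a dictionary mapping a hypothetical asset identifier to its observed open ports. The names and data are illustrative only.

```python
from typing import Dict, List, Set, Tuple

Snapshot = Dict[str, Set[int]]  # hypothetical: asset id -> observed open ports
Event = Tuple[str, str, str]    # (kind, asset id, detail)


def diff_snapshots(previous: Snapshot, current: Snapshot) -> List[Event]:
    """Compare two observations and report new, vanished, and changed assets."""
    events: List[Event] = []
    # Assets present now but not before.
    for asset_id in sorted(current.keys() - previous.keys()):
        events.append(("appeared", asset_id, f"ports {sorted(current[asset_id])}"))
    # Assets present before but no longer observed.
    for asset_id in sorted(previous.keys() - current.keys()):
        events.append(("disappeared", asset_id, ""))
    # Assets present in both snapshots whose exposed ports changed.
    for asset_id in sorted(current.keys() & previous.keys()):
        opened = current[asset_id] - previous[asset_id]
        closed = previous[asset_id] - current[asset_id]
        if opened or closed:
            events.append(("changed", asset_id,
                           f"opened {sorted(opened)}, closed {sorted(closed)}"))
    return events


if __name__ == "__main__":
    t0 = {"web-01": {80, 443}, "db-01": {5432}}
    t1 = {"web-01": {443}, "db-01": {5432}, "pod-7f3a": {8080}}
    for event in diff_snapshots(t0, t1):
        print(event)
```

In practice the snapshots could come from passive traffic analysis, agents, or API connectors, and would be taken far more often than a periodic audit. The diffing logic itself stays the same.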

System integrations also play an important role. Through dedicated APIs and connectors, it becomes possible to connect different platforms such as CMDBs, EDR solutions, firewalls, and cloud providers, so that each system contributes to the overall view of the infrastructure.

Once this data is aggregated, a further step is needed: information reconciliation. The same asset can be described differently depending on the source (a CMDB may record it by hostname, a firewall by IP or MAC address), so infrastructure management and orchestration tools standardize this information and build a consistent representation of the technological environment.
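Here is a minimal sketch of reconciliation. It assumes each source yields flat records with optional `hostname` and `mac` fields; these field names, and the sources in the example, are hypothetical. Identifiers are first normalized into a lower-case short hostname and a MAC address stripped of separators. Records sharing any normalized identifier are then merged into one asset using a union-find structure. Matching on the short hostname alone is a deliberate simplification, since it would merge identically named hosts in different domains. Real products use richer matching rules and confidence scores.

```python
import re
from collections import defaultdict


def normalize(record: dict) -> set:
    """Return the normalized identifiers a record can be matched on."""
    keys = set()
    if record.get("hostname"):
        # "WEB-01.corp.example" -> "host:web-01"
        keys.add("host:" + record["hostname"].split(".")[0].lower())
    if record.get("mac"):
        # "00-1A-2B-3C-4D-5E" and "001a.2b3c.4d5e" -> "mac:001a2b3c4d5e"
        keys.add("mac:" + re.sub(r"[^0-9a-f]", "", record["mac"].lower()))
    return keys


def reconcile(records: list) -> list:
    """Group records that share at least one normalized identifier."""
    parent = list(range(len(records)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps trees shallow
            i = parent[i]
        return i

    first_seen = {}  # normalized identifier -> index of first record carrying it
    for i, record in enumerate(records):
        for key in normalize(record):
            if key in first_seen:
                parent[find(i)] = find(first_seen[key])  # merge the two groups
            else:
                first_seen[key] = i

    groups = defaultdict(list)
    for i, record in enumerate(records):
        groups[find(i)].append(record)
    return list(groups.values())


if __name__ == "__main__":
    records = [
        {"source": "cmdb", "hostname": "WEB-01.corp.example"},
        {"source": "edr", "hostname": "web-01", "mac": "00-1A-2B-3C-4D-5E"},
        {"source": "firewall", "mac": "001a.2b3c.4d5e", "ip": "10.0.0.10"},
        {"source": "cloud", "hostname": "db-01"},
    ]
    for group in reconcile(records):
        print([r["source"] for r in group])
```

Note that the CMDB and firewall records share no identifier directly. They end up in the same asset because the EDR record links them, which is exactly the kind of transitive match a simple pairwise comparison would miss.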

In this scenario, traditional IT management tools and those developed for cybersecurity begin to complement each other, contributing together to the creation of a more reliable view of the infrastructure.

The idea of a perfect inventory has always held great appeal for those managing complex information systems, as the prospect of having a constantly updated map of the infrastructure represents a form of total control over the technological environment.

As we have seen, however, operational experience shows that this goal is unrealistic in a context of dynamic infrastructures, heterogeneous levels of obsolescence, and growing integration between different technological worlds. For this reason, many organizations are adopting a different paradigm. They build systems that adapt to infrastructure changes and turn the inventory from a static document into a living representation of the technological environment.

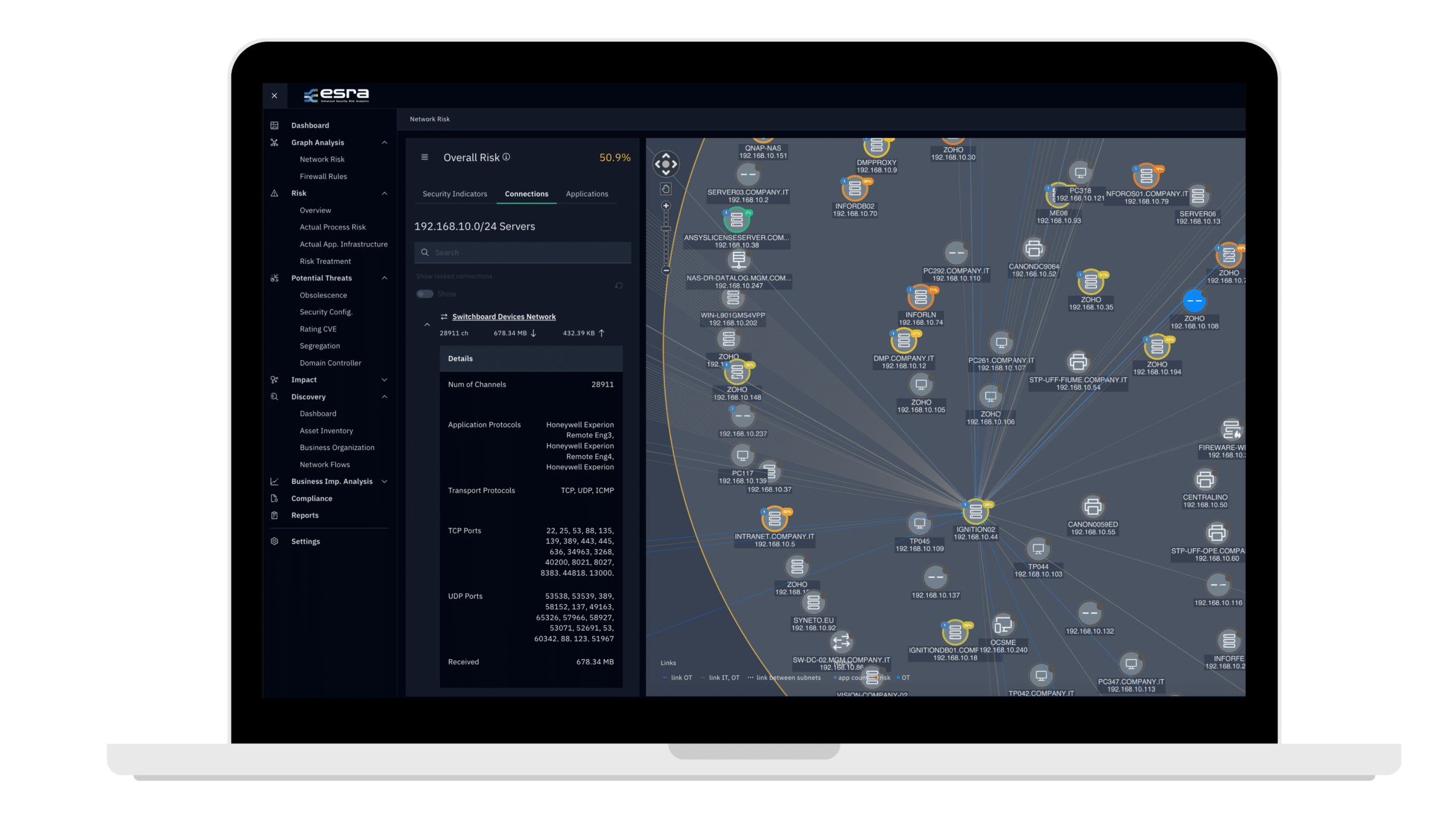

ai.esra integrates this approach into a broader risk analysis process. It automatically detects assets within the perimeter and reconstructs network communications between systems to generate a digital twin of the infrastructure that continuously reflects the real state of the environment. This representation shows how systems interact and how potential threats can propagate along existing connections, even in heterogeneous infrastructures where IT systems coexist with OT components and IoT devices. Viewing the infrastructure as an interconnected, constantly evolving system is what turns the asset inventory from a simple technical log into a fundamental tool for understanding the security of the entire infrastructure.
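The idea of threats propagating along existing connections can be illustrated with a generic reachability analysis. This is not ai.esra's implementation. The sketch assumes observed communications are available as hypothetical (source, destination) pairs and builds a directed graph from them. It then uses breadth-first search to list every asset reachable from a potentially compromised one, together with the number of hops. Real risk analysis would also weigh protocols, vulnerabilities, and controls on each link, so reachability alone is only a first approximation of exposure.

```python
from collections import deque


def build_graph(flows):
    """Build a directed adjacency map from observed (source, destination) flows."""
    graph = {}
    for src, dst in flows:
        graph.setdefault(src, set()).add(dst)
        graph.setdefault(dst, set())  # make sure pure destinations are nodes too
    return graph


def reachable_from(graph, start):
    """Breadth-first search: assets reachable from `start`, with hop counts."""
    hops = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbour in sorted(graph.get(node, ())):
            if neighbour not in hops:
                hops[neighbour] = hops[node] + 1
                queue.append(neighbour)
    del hops[start]  # report only the other assets
    return hops


if __name__ == "__main__":
    # Hypothetical flows spanning IT, OT, and IoT assets.
    flows = [
        ("laptop-17", "file-server"),
        ("file-server", "scada-hmi"),
        ("scada-hmi", "plc-line-2"),
        ("iot-sensor-4", "mqtt-broker"),
    ]
    graph = build_graph(flows)
    print(reachable_from(graph, "laptop-17"))
```

In this toy example, an office laptop is three hops away from a PLC. That kind of IT-to-OT path stays invisible in a flat list of assets.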

Understanding and maintaining the asset inventory matters even more today in light of the NIS2 Directive. The directive requires organizations to have a clear view of the systems that make up their digital infrastructure and of their interdependencies. To support companies through this compliance process, ai.esra has developed NIS2do, a tool designed to help organizations prioritize actions and maintain a sustainable compliance model over time.

ai.esra SpA – strada del Lionetto 6 Torino, Italy, 10146

Tel +39 011 234 4611

CAP. SOC. € 50.000,00 i.v. – REA TO1339590 CF e PI 13107650015

“This website is committed to ensuring digital accessibility in accordance with European regulations (EAA). To report accessibility issues, please write to: ai.esra@ai-esra.com”

© 2024 Esra – All Rights Reserved